Why Most AI Projects Never See Daylight

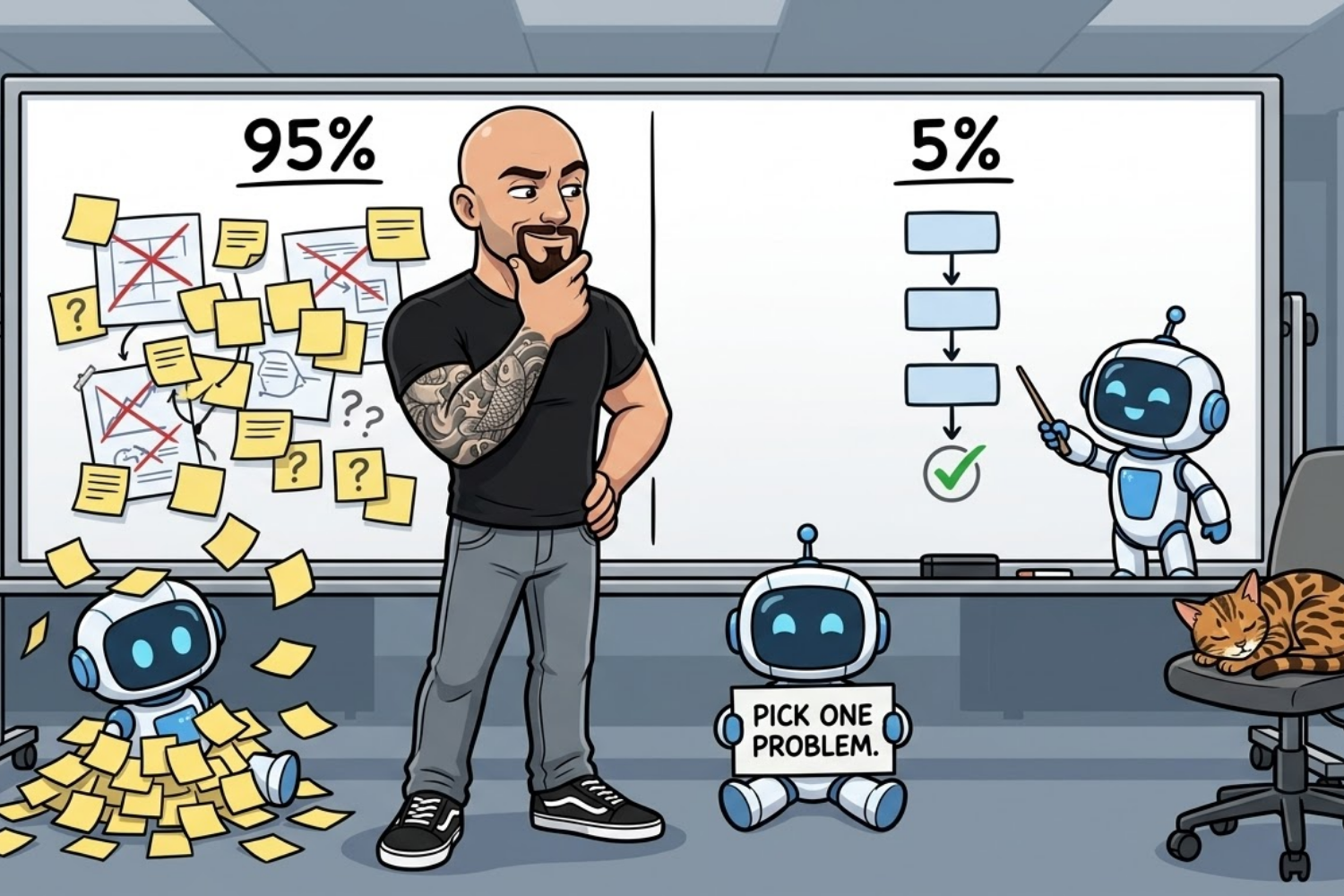

Here's a number that should ruin your morning: 95% of enterprise generative AI pilots produce zero measurable business impact. Not "underperformed." Not "needs more time." Zero.

That's from MIT's GenAI Divide report, which reviewed over 300 publicly disclosed AI initiatives, interviewed representatives from 52 organizations, and surveyed 153 senior leaders. Their conclusion was blunt. The problem isn't bad models. It's bad decisions made before anyone writes a line of code.

Meanwhile, worldwide AI spending is expected to hit $2.5 trillion in 2026, according to Gartner. Budgets are climbing. NVIDIA's latest State of AI survey found 86% of companies plan to increase AI spending this year. And yet, most of that money will fund projects that never make it out of pilot.

That's not a technology problem. That's a leadership problem.

What the 5% Do Differently

The MIT research identified a clear pattern among the small group that actually ships AI into production. Three things separate them from the 95%.

First, they pick one problem. Not "transform the business with AI." One pain point. One workflow. One measurable outcome. The successful companies in the study chose narrow, boring targets like document processing, compliance checks, and internal operations. Not the flashy stuff. The stuff that actually costs money when it breaks.

Second, they buy before they build. This one surprised people. Companies that purchased AI tools from specialized vendors succeeded about twice as often as companies that tried to build everything in-house. MIT's lead author put it simply: almost everywhere they went, enterprises were trying to build their own tools. And the data showed purchased solutions delivered more reliable results.

Third, they push decisions down. The winning organizations didn't run AI adoption out of a central innovation lab. They empowered line managers - the people who actually understand the workflows - to drive it. When the people closest to the problem choose the tool, the tool is more likely to solve the problem.

Identifying the Real Opportunities

We see this pattern constantly at SPARK6. A company comes to us wanting to "add AI" to their product or operations. When we ask what problem they're solving, the room goes quiet.

That silence is expensive. It usually means six months of exploration, three vendor demos, a pilot that technically works but nobody uses, and a quiet pivot back to the old way of doing things.

The projects that actually ship start with a different sentence. Not "we need AI" but "we lose 40 hours a week to manual data entry" or "our support team answers the same 12 questions 200 times a month." That's a problem you can solve. And you can measure whether you solved it.

Three Questions Before You Greenlight Anything

If you're about to approve an AI project - or you're reviewing one that's stalled - run it through these three filters.

What is the one metric this project will move? If the answer is vague ("efficiency" or "innovation"), stop. Redefine the target until it fits on an index card.

Who owns this after launch? If the answer is "the AI team" or "IT," you have a pilot, not a product. The business owner of the workflow should own the AI tool that supports it.

Can we measure success in 90 days? If the timeline to prove value is "12-18 months," you're funding a research project, not a business initiative. The best AI deployments show measurable results within one quarter.

The Real Risk Isn't Spending Too Much

Gartner says AI is currently in the "Trough of Disillusionment." That's their way of saying we're past the hype and now we're in the hard part. Budgets are still going up, but expectations are finally getting realistic.

The risk right now isn't that you'll overspend on AI. It's that you'll spend on the wrong things. Three-quarters of CEOs surveyed by KPMG said that generative AI may have been overhyped in the last year, but that its long-term impact is still underestimated. They're probably right on both counts.

The companies that win in 2026 won't be the ones that spent the most. They'll be the ones that spent on one clear problem, measured the result, and expanded from there.

That's not glamorous. But it's what works.

Find your next edge,

Eli
